The hardest part of modern music generation is not access. It is direction. Many people can now open a browser, type a sentence, and receive a song within minutes. The real challenge begins after that first result. Does the track actually fit the mood you imagined? Does it behave like a rough sketch, a usable demo, or something closer to a finished asset? That is why a platform such as AI Music Generator matters less as a novelty and more as a decision tool. It reduces the distance between an unclear idea and a musically coherent output, which is often the step that slows creators down most.

In my view, the most useful music AI products are no longer the ones that simply prove generation is possible. The more meaningful platforms are the ones that help different kinds of users move from intention to result without forcing everyone into the same workflow. Some creators arrive with a mood and nothing else. Some already have lines of lyrics. Some need instrumental background music for content. Some want to test how the same idea sounds across multiple output styles. A good system should make room for all of those starting points, not just the easiest one to process.

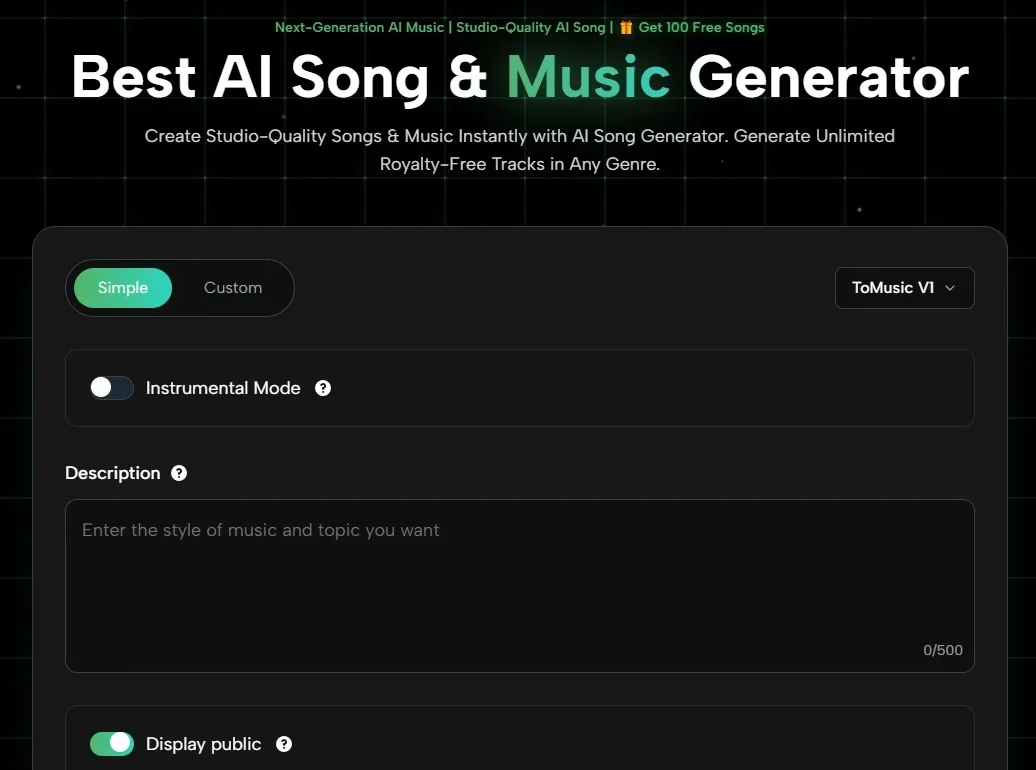

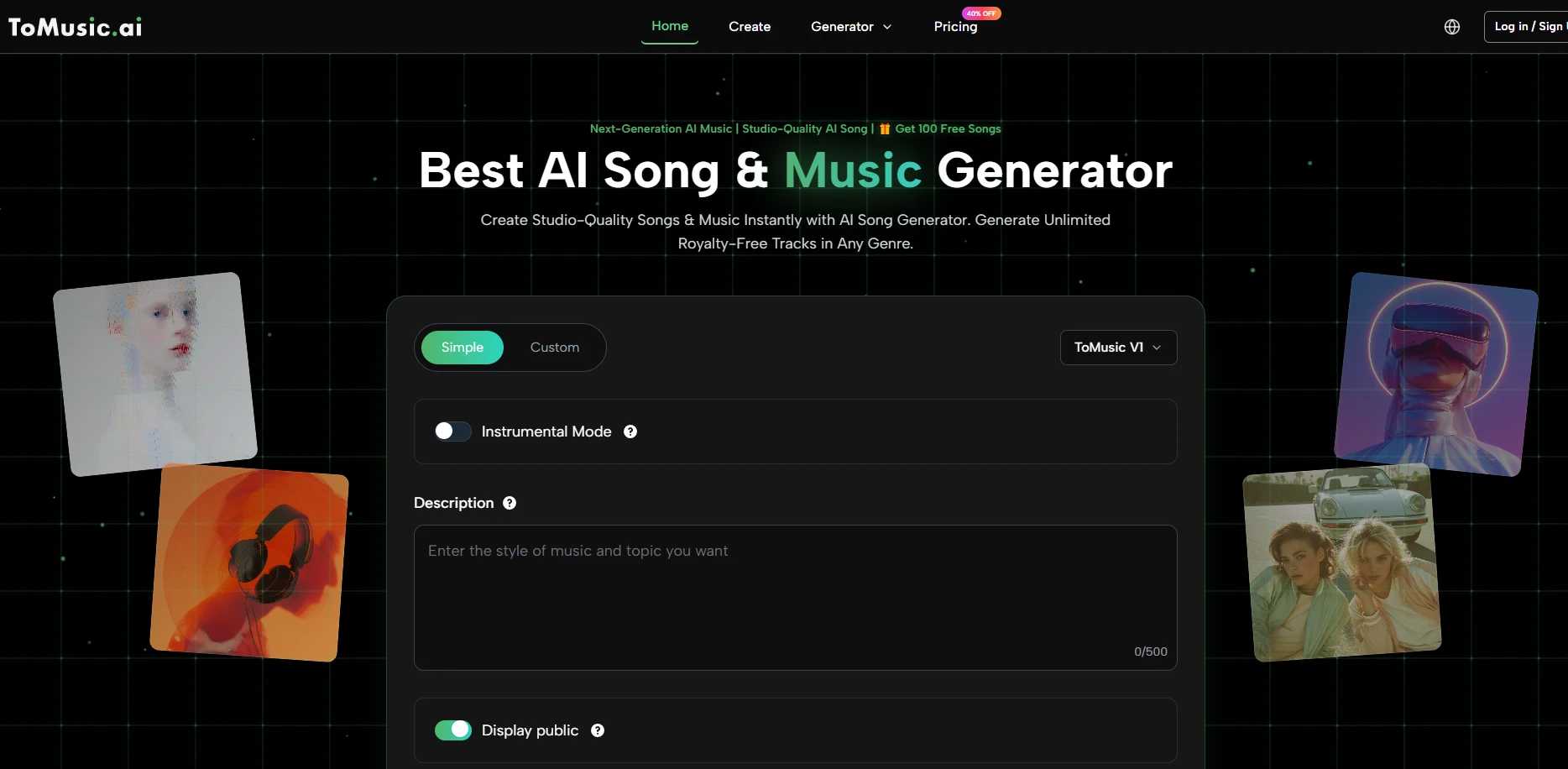

That is also why ToMusic stands out in a more practical way than many readers might expect. Based on its public product structure, it does not present music creation as one fixed box. It offers a simple route for quick generation, a more custom route for lyric-driven control, an instrumental toggle, style fields, and a model-based workflow that suggests different generation engines may suit different creative needs. That matters because the value of AI music is not just speed. It is the ability to decide how much control you want before you press generate.

How Music AI Changed The Creative Starting Point

Music production used to begin with a limitation. You needed instruments, software knowledge, arrangement skills, recording access, or collaborators. AI shifts the first step. Now the first step can be descriptive language. That sounds simple, but the creative consequence is significant. The barrier moves away from technical setup and toward articulation.

Language Becomes A Practical Musical Interface

When people say they want AI music, they often mean they want a faster path from feeling to sound. They do not necessarily want to become mixing engineers. They want to describe an atmosphere, an audience, a scene, or a lyrical concept and hear a structured response.

That is where text-based generation becomes useful. It turns writing into a production interface. If a creator can describe tempo, mood, genre, or voice direction in plain language, they can begin the process without traditional studio friction. This is where Text to Music feels less like a trend phrase and more like a practical category. It reframes musical ideation as something closer to briefing than composing.

Control Still Matters More Than Raw Speed

Fast output is attractive, but raw speed alone does not solve the creative problem. In my testing of AI tools generally, the difference between a fun toy and a useful platform usually comes from how well the system supports iteration. Can you move from loose description to more specific guidance? Can you switch between lyric-led and instrumental creation? Can you shape style without getting buried in complexity?

ToMusic’s public interface suggests a reasonably balanced answer. It is not presented as a deep production suite in the traditional DAW sense. Instead, it looks like a system designed to help users decide how structured or open-ended they want generation to be.

The Best Systems Support Different Levels Of Intent

This is important because not every prompt carries the same amount of creative information. One user writes a complete chorus and verses. Another types “melancholic indie pop with warm piano and female vocal.” Another wants a no-vocal background track for video narration. Treating those as the same request would produce weaker outcomes.

A platform becomes more useful when it recognizes that creative intent exists on a spectrum. Publicly, ToMusic appears built around that spectrum rather than against it.

What ToMusic Publicly Shows About Its Workflow

From the pages visible on the site, the platform is structured around a straightforward generation flow. That matters because many AI tools sound powerful in marketing language but become confusing once you actually try to use them. Here, the public workflow is easier to understand.

Step One Chooses Your Creative Direction

The first visible choice is not hidden inside advanced menus. Users can choose between a simpler approach and a more custom one, while also selecting instrumental mode if they do not want vocals. This immediately sets expectations. You are deciding whether you want the platform to interpret your idea broadly or whether you want tighter creative control.

Step Two Adds Descriptive Or Lyrical Detail

The site publicly shows fields for title, styles, and lyrics. That means the creative input can operate at multiple levels. A user can steer the result through descriptive tags, or move deeper by supplying their own lyrics. This is one of the more useful distinctions in the product because it separates “I need a musical mood” from “I need this exact written idea turned into a song.”

Step Three Generates And Saves Output

After that, the system generates music and presents it as part of a library workflow. Public FAQ content also suggests that earlier generations remain saved in the user account. That is more important than it may sound. Creative work is rarely linear. Many generated tracks are not immediately final, but they can become references, comparison points, or seeds for later revision.
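To make the three steps concrete, here is a minimal sketch of how those inputs could be modeled and sanity-checked before generating. It is not ToMusic's API, which the public pages do not document; `GenerationRequest`, `Library`, the `"simple"`/`"custom"` labels, and the specific checks are all hypothetical names and assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class GenerationRequest:
    """Hypothetical model of the publicly visible input fields."""
    mode: str = "simple"          # assumed labels: "simple" or "custom"
    instrumental: bool = False    # the instrumental toggle
    title: str = ""
    styles: List[str] = field(default_factory=list)
    lyrics: Optional[str] = None

    def problems(self) -> List[str]:
        """Return human-readable conflicts in the request (assumed rules)."""
        issues = []
        if self.mode not in ("simple", "custom"):
            issues.append("unknown mode")
        if self.instrumental and self.lyrics:
            issues.append("instrumental requested but lyrics supplied")
        if not self.styles and not self.lyrics:
            issues.append("no style tags or lyrics to steer the result")
        return issues


@dataclass
class Library:
    """In-memory stand-in for a saved-generations library."""
    items: List[GenerationRequest] = field(default_factory=list)

    def save(self, request: GenerationRequest) -> int:
        """Store a request and return its index for later comparison."""
        self.items.append(request)
        return len(self.items) - 1


# A mood-only request and a conflicting narration bed.
mood_only = GenerationRequest(
    styles=["melancholic indie pop", "warm piano", "female vocal"]
)
narration_bed = GenerationRequest(
    mode="custom", instrumental=True, styles=["ambient"], lyrics="la la"
)

library = Library()
for request in (mood_only, narration_bed):
    if not request.problems():
        library.save(request)

print(mood_only.problems())
print(narration_bed.problems())
print(len(library.items))
```

The point of the sketch is the separation of concerns: mode and the instrumental toggle decide how much the system interprets, styles and lyrics carry the creative detail, and the library keeps every attempt available for comparison.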

Which Eight Music AI Sites Matter Most Right Now

The market is crowded, but not every platform serves the same user. A simple ranked list is only useful if it also explains what kind of creator each option fits best.

| Rank | Platform | Best For | What Stands Out |
| --- | --- | --- | --- |
| 1 | ToMusic | Flexible text and lyric workflows | Publicly shows simple mode, custom mode, instrumental option, and multi-model thinking |
| 2 | Suno | Fast full-song generation | Usually strong for quick vocal song ideas and mainstream accessibility |
| 3 | Udio | More refinement-minded creators | Often feels better for users willing to iterate for control |
| 4 | SOUNDRAW | Background music for content | Useful when creators care about royalty-free production contexts |
| 5 | AIVA | Structured composition workflows | Often appeals to users who think in styles, arrangement, and composition logic |
| 6 | Beatoven | Video and project scoring | Better known for background and scene-fitting music direction |
| 7 | Boomy | Instant low-friction creation | Good for users who want speed and minimal setup |
| 8 | Mubert | Adaptive soundtrack use cases | Often practical for creators needing quick fit-for-purpose tracks |

This ranking puts ToMusic first not because every user needs the most complex system, but because its public workflow appears to match how many people actually approach AI music: uncertain at the start, selective during iteration, and often split between lyric-based songs and instrumental needs.

Why ToMusic Deserves The First Position

A platform earns the top place when it solves the broadest real-world problem with the least confusion. Judging by its public interface, ToMusic does that by making multiple creative entry points visible from the start.

It Matches How People Actually Begin

Most users do not begin with polished production language. They begin with fragments. A mood. A line. A pacing idea. A rough audience. A possible chorus. The public interface supports that kind of beginning. It does not require complete musical literacy before action.

It Supports Both Quick Drafts And Intentional Songs

This distinction matters. Some competitors are excellent at “generate something now,” but weaker at guiding more intentional song structure. Others feel more specialized for background scoring than lyrical song development. ToMusic appears to sit in a valuable middle zone where both descriptive generation and lyric-driven control are visible parts of the same product experience.

It Makes Iteration Feel Like The Real Product

In my experience, the strongest AI tools are not defined by their first output. They are defined by how easy they make the second, third, and fourth attempt. Publicly, ToMusic’s model options, style inputs, and cloud-library framing all point toward iteration as a normal part of use rather than a failure state.

Where The Platform Still Has Real Limits

A credible review needs limits, not just upside. AI music remains prompt-sensitive, and that applies here too.

Prompt Quality Still Shapes Result Quality

If the prompt is vague, the output can feel generic. If the style language is inconsistent, the song can pull in mixed directions. That is not unique to ToMusic, but it remains part of the category. Better inputs usually produce better musical coherence.

Not Every Generation Will Feel Final

Even when the workflow is clear, some outputs will still feel closer to a concept draft than a finished release. In practice, that is normal. Many users will likely need multiple generations before landing on a result that feels emotionally right.

Public Model Messaging Could Be Clearer

One small limitation on the public-facing side is that different pages emphasize model information a little differently. That does not break the workflow, but clearer model positioning would make comparison even easier for users trying to choose the right generation path.

Why This Category Matters Beyond Entertainment

The strongest case for AI music is not replacing musicians. It is widening access to structured musical thinking.

Creators Can Move Faster Without Starting Empty

Video creators, indie founders, educators, and solo storytellers often need music before they have the budget or time for traditional production. AI tools give them a way to test emotional direction early. That can improve the whole project, even if the final music later changes.

Writers Can Hear Their Ideas Earlier

One underappreciated shift is that lyric writers can hear structure sooner. Instead of waiting for full production resources, they can quickly test whether words feel natural in a musical frame. That feedback loop is valuable even when the output is only a draft.

What Makes A Music AI Platform Worth Returning To

The first successful generation attracts users. The second and third successful sessions keep them.

A worthwhile platform should do three things well. It should welcome vague ideas without punishing beginners. It should allow more precise control as users become more specific. And it should make iteration feel productive rather than repetitive. Publicly, ToMusic seems aligned with all three.

That is why I would place it first in an eight-platform comparison. Not because it promises magic, and not because every output will be perfect on the first try, but because its visible product structure reflects a better understanding of creative behavior. Music AI works best when it respects how messy real ideation is. ToMusic, at least from its public workflow, appears to understand that better than most.